After retrieving stock information from Yahoo Finance and other sources with the tools described in the previous blog post, the next useful step is to filter for stocks that meet certain requirements, much like the Google stock screener does.

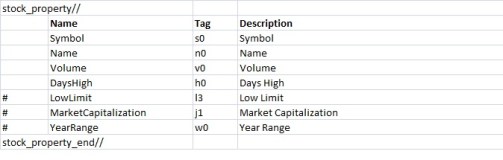

The script (available on GitHub) takes in a text file that specifies the criteria and filters the stocks with Python pandas. The text file is formatted so that users can easily enter the criteria, and the script reads them with the DictParser module described in the following blog post. The DictParser module also makes it easy to create new criteria. A sample criteria file is shown below.

    $greater
    Volume:999999
    PERATIO:4
    Current Ratio (mrq):1.5
    Qtrly Earnings Growth (yoy):0
    DilutedEPS:0

    $less
    PERATIO:17
    Mean Recommendation (this week):3

    $compare
    1:YEARHIGH,OPEN,greater,0

The DictParser object produces three dicts from the criteria file above, one each for the "greater", "less" and "compare" sections. Together they define the requirements a stock must meet. The stock data, once retrieved (as .csv), are converted to a pandas DataFrame for easy filtering, and the stocks eventually selected match all the criteria within a given criteria file.
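To make the shape of those three dicts concrete, here is a minimal sketch of a parser for this kind of file. It is not the DictParser module itself: `parse_criteria_text` is a hypothetical stand-in, and it assumes one `$section` header or one `key:value` pair per line, with each value split on commas into a list (the script reads values as lists, e.g. `greater_dict[n][0]`). It is written for Python 3.

```python
def parse_criteria_text(text):
    """Parse '$section' headers and 'key:value' lines into a dict of dicts.

    Hypothetical stand-in for DictParser, for illustration only.
    Every value is split on commas and stored as a list of strings.
    """
    result = {}
    current = None
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if not line:
            continue  # skip blank lines
        if line.startswith('$'):
            # A new section such as '$greater' starts a new inner dict.
            current = result.setdefault(line[1:].strip(), {})
            continue
        if current is None or ':' not in line:
            continue  # ignore lines outside a section or without a key
        key, _, value = line.partition(':')
        current[key.strip()] = [item.strip() for item in value.split(',')]
    return result


sample = """
$greater
Volume:999999
PERATIO:4
Current Ratio (mrq):1.5

$less
PERATIO:17

$compare
1:YEARHIGH,OPEN,greater,0
"""

criteria = parse_criteria_text(sample)
print(criteria['greater']['Volume'])  # → ['999999']
print(criteria['compare']['1'])       # → ['YEARHIGH', 'OPEN', 'greater', '0']
```

Keeping every value as a list means the single-number thresholds and the four-item compare rules can share one format.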

Under the "greater" dict, each key-value pair means that only stocks whose key (e.g. Volume) is greater than the value (e.g. 999999) are selected. Under the "less" dict, only stocks whose key is less than the corresponding value are selected. The "compare" dict does not make use of its keys; it uses only the value (a list) stored under each key.
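The "greater" and "less" filtering described above can be sketched on a tiny DataFrame. The function name `apply_threshold_filters` and the stock figures are made up for illustration; the comparison logic follows the script's approach of boolean indexing and skipping criteria whose column is absent. Written for Python 3.

```python
import pandas as pd


def apply_threshold_filters(df, greater_dict, less_dict):
    """Keep rows above every 'greater' threshold and below every 'less' one."""
    for column, values in greater_dict.items():
        if column not in df.columns:
            continue  # skip criteria whose column is missing
        df = df[df[column] > float(values[0])]
    for column, values in less_dict.items():
        if column not in df.columns:
            continue
        df = df[df[column] < float(values[0])]
    return df


# Made-up figures, purely for illustration.
stocks = pd.DataFrame({
    'SYMBOL': ['AAA', 'BBB', 'CCC'],
    'VOLUME': [1500000, 500000, 2000000],
    'PERATIO': [12.0, 9.0, 25.0],
})

selected = apply_threshold_filters(
    stocks,
    greater_dict={'VOLUME': ['999999'], 'PERATIO': ['4']},
    less_dict={'PERATIO': ['17']},
)
print(selected['SYMBOL'].tolist())  # → ['AAA']
```

Because each filter is applied to the already-filtered frame, a stock survives only if it meets every criterion.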

Each value list in the "compare" dict has four items. The first item is compared against the second, with the third item as the comparator and the last item as the value: the difference between the first and second items must be greater (or less) than that value. For example, "YEARHIGH,OPEN,greater,0" selects stocks whose "YearHigh" price exceeds the "Open" price by more than 0, so practically every stock passes this particular criterion.
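A compare rule can be sketched as below. `apply_compare` is a hypothetical helper and the prices are invented; the logic mirrors the script in taking the difference of the two columns and testing it against the value. Written for Python 3.

```python
import pandas as pd


def apply_compare(df, rule):
    """Filter on (first - second) compared against a numeric value.

    rule is a four-item list: [first_column, second_column, comparator, value].
    """
    first, second, comparator, value = rule
    if first not in df.columns or second not in df.columns:
        return df  # skip the rule if either column is missing
    diff = df[first] - df[second]
    threshold = float(value)
    if comparator == 'greater':
        return df[diff > threshold]
    if comparator == 'less':
        return df[diff < threshold]
    return df  # unknown comparator: leave the data untouched


# Invented prices, purely for illustration.
prices = pd.DataFrame({
    'SYMBOL': ['AAA', 'BBB', 'CCC'],
    'YEARHIGH': [10.0, 5.0, 8.0],
    'OPEN': [9.0, 5.0, 7.5],
})

loose = apply_compare(prices, ['YEARHIGH', 'OPEN', 'greater', '0'])
strict = apply_compare(prices, ['YEARHIGH', 'OPEN', 'greater', '0.75'])
print(loose['SYMBOL'].tolist())   # → ['AAA', 'CCC']
print(strict['SYMBOL'].tolist())  # → ['AAA']
```

Note that the comparison is strict, so a stock opening exactly at its year high (BBB here) is dropped even with a value of 0.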

Users can easily add or delete criteria as long as they conform to the format. The script can run several criteria files in one go, so users can create multiple criteria files, each catering to a different risk appetite. Below is the part of the script that gets the different criteria dicts from the DictParser and uses them to filter the data.

    def get_all_criteria_fr_file(self):
        """ Created in format of the dictparser.

            Dict parser will contain the greater, less than, sorting dicts for easy filtering.
            Will parse according to the self.criteria_type.
            Will also set the output file name.
        """
        self.dictparser = DictParser(self.criteria_type_path_dict[self.criteria_type])
        self.criteria_dict = self.dictparser.dict_of_dict_obj
        self.modified_df = self.data_df
        self.set_output_file()

    def process_criteria(self):
        """ Process the different criteria generated.

            Currently handles the greater, less and compare dicts.
        """
        greater_dict = dict()
        less_dict = dict()
        compare_dict = dict()

        # Single-argument print(...) and the "in" test run on both Python 2 and 3.
        print('Processing each filter...')
        print('-'*40)

        if 'greater' in self.criteria_dict: greater_dict = self.criteria_dict['greater']
        if 'less' in self.criteria_dict: less_dict = self.criteria_dict['less']
        if 'compare' in self.criteria_dict: compare_dict = self.criteria_dict['compare']

        # Keep only rows where the column is above the threshold.
        for n in greater_dict.keys():
            if n not in self.modified_df.columns: continue #continue if criteria not found
            self.modified_df = self.modified_df[self.modified_df[n] > float(greater_dict[n][0])]
            if self.print_qty_left_aft_screen:
                self.__print_criteria_info('Greater', n)
                self.__print_modified_df_qty()

        # Keep only rows where the column is below the threshold.
        for n in less_dict.keys():
            if n not in self.modified_df.columns: continue #continue if criteria not found
            self.modified_df = self.modified_df[self.modified_df[n] < float(less_dict[n][0])]
            if self.print_qty_left_aft_screen:
                self.__print_criteria_info('Less', n)
                self.__print_modified_df_qty()

        # Compare the difference between two columns against a value.
        for n in compare_dict.keys():
            first_item = compare_dict[n][0]
            sec_item = compare_dict[n][1]
            compare_type = compare_dict[n][2]
            compare_value = float(compare_dict[n][3])

            if first_item not in self.modified_df.columns: continue #continue if criteria not found
            if sec_item not in self.modified_df.columns: continue #continue if criteria not found

            if compare_type == 'greater':
                self.modified_df = self.modified_df[(self.modified_df[first_item] - self.modified_df[sec_item]) > compare_value]
            elif compare_type == 'less':
                self.modified_df = self.modified_df[(self.modified_df[first_item] - self.modified_df[sec_item]) < compare_value]

            if self.print_qty_left_aft_screen:
                self.__print_criteria_info('Compare', first_item, sec_item)
                self.__print_modified_df_qty()

        print('END')
        print('\nSnapshot of final df ...')
        self.__print_snapshot_of_modified_df()

Sample output from one of the criteria files is shown below. It screens for stocks that pay a high dividend yet have good fundamentals (only basic parameters are listed). The "Modified_df qty" lines show the number of stocks left after each criterion is applied.

    List of filter for the criteria: dividend
    ----------------------------------------
    VOLUME > 999999
    Qtrly Earnings Growth (yoy) > 0
    DILUTEDEPS > 0
    DAYSLOW > 1.1
    TRAILINGANNUALDIVIDENDYIELDINPERCENT > 4
    PERATIO < 15
    TrailingAnnualDividendYieldInPercent < 10

    Processing each filter...
    ----------------------------------------
    Current Screen criteria: Greater VOLUME
    Modified_df qty: 53
    Current Screen criteria: Greater Qtrly Earnings Growth (yoy)
    Modified_df qty: 48
    Current Screen criteria: Greater DILUTEDEPS
    Modified_df qty: 48
    Current Screen criteria: Greater DAYSLOW
    Modified_df qty: 24
    Current Screen criteria: Greater TRAILINGANNUALDIVIDENDYIELDINPERCENT
    Modified_df qty: 5
    Current Screen criteria: Less PERATIO
    Modified_df qty: 4
    END

    Snapshot of final df ...
         Unnamed: 0   SYMBOL              NAME  LASTTRADEDATE    OPEN  \
    17            4   O39.SI         OCBC Bank      10/3/2014   9.680
    21            8   BN4.SI       Keppel Corp      10/3/2014  10.380
    37            5  C38U.SI  CapitaMall Trust      10/3/2014   1.925
    164          14   U11.SI               UOB      10/3/2014  22.300

         PREVIOUSCLOSE  LASTTRADEPRICEONLY   VOLUME  AVERAGEDAILYVOLUME  DAYSHIGH  \
    17           9.710               9.740  3322000             4555330     9.750
    21          10.440              10.400  4280000             2384510    10.410
    37           1.925               1.925  5063000             7397900     1.935
    164         22.270              22.440  1381000             1851720    22.470

         ...  Mean Recommendation (last week)  \
    17   ...                              2.6
    21   ...                              2.1
    37   ...                              2.5
    164  ...                              2.8

                                    Change  Mean Target  Median Target  \
    17                                 0.0        10.53          10.63
    21   <font color="#cc0000">-0.1</font>        12.26          12.50
    37                                 0.1         2.14           2.14
    164                                0.0        24.03          23.60

         High Target  Low Target  No. of Brokers            Sector  \
    17         12.23        7.96              22         Financial
    21         13.50       10.00              23  Industrial Goods
    37          2.40        1.92              21         Financial
    164        26.80       22.00              23         Financial

                   Industry                                       company_desc
    17   Money Center Banks  Oversea-Chinese Banking Corporation Limited of...
    21  General Contractors  Keppel Corporation Limited primarily engages i...
    37        REIT - Retail  CapitaMall Trust (CMT) is a publicly owned rea...
    164  Money Center Banks  United Overseas Bank Limited provides various ...

    [4 rows x 70 columns]
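The running "Modified_df qty" count can be reproduced with a small sketch that records how many rows survive each filter. `screen_with_counts`, the column names and the figures are all hypothetical, and the code targets Python 3. A criterion whose column name is not found is skipped and never logged; since column lookups are case-sensitive, this may be why the mixed-case `TrailingAnnualDividendYieldInPercent < 10` filter is listed in the sample output but has no quantity line.

```python
import pandas as pd


def screen_with_counts(df, greater_dict, less_dict):
    """Apply each threshold in turn and record how many rows survive it."""
    counts = []
    steps = [('Greater', col, vals) for col, vals in greater_dict.items()]
    steps += [('Less', col, vals) for col, vals in less_dict.items()]
    for kind, column, values in steps:
        if column not in df.columns:
            continue  # criteria on unknown columns are skipped, not logged
        threshold = float(values[0])
        if kind == 'Greater':
            df = df[df[column] > threshold]
        else:
            df = df[df[column] < threshold]
        counts.append((kind, column, len(df)))
    return df, counts


# Made-up figures, purely for illustration.
stocks = pd.DataFrame({
    'SYMBOL': ['AAA', 'BBB', 'CCC', 'DDD'],
    'VOLUME': [1500000, 500000, 2000000, 3000000],
    'DIVYIELD': [5.0, 6.0, 2.0, 4.5],
    'PERATIO': [12.0, 9.0, 10.0, 20.0],
})

final_df, counts = screen_with_counts(
    stocks,
    greater_dict={'VOLUME': ['999999'], 'DIVYIELD': ['4']},
    less_dict={'PERATIO': ['15'], 'DivYield': ['10']},  # 'DivYield' is not a column
)
for kind, column, qty in counts:
    print('Current Screen criteria: %s %s' % (kind, column))
    print('Modified_df qty: %d' % qty)
```

Returning the counts alongside the filtered frame makes it easy to see which single criterion removes the most stocks.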